Jennifer Clinehens, author of CX That Sings and Choice Hacking, is an expert in customer behavior psychology and Head of Experience at The Marketing Store, an agency that focuses on customer experience. She joined the Feedback Tribe for an energetic round of Ask Me Anything.

Here are the 10 most-liked questions. To read the full Q&A, join the Feedback Tribe Slack group.

Q: What’s a good cadence for doing Customer Journey Mapping sessions? And who should or shouldn’t you invite?

Jennifer: It really depends on the urgency of the project and the depth of the Customer Journey Map (how much data, how many touchpoints, etc. there are to work through). A good cadence for pulling in stakeholders is probably every other week, but it always depends on diaries, availability, and the size of the CJM team as well as the org. You need to leave a little room for supporting research and for stakeholders to get their “day jobs” done!

As for attendees, you have to know the players. Someone who likes to shut down every session with negativity, or who is too quick to judge ideas, is probably not an ideal workshop participant. You also have to balance seniority in a smart way. With too many senior people, you end up with a room that doesn’t know what customers are actually doing (and some junior folks might get anxious about speaking truths). With too few, you won’t get the political buy-in needed for people to adopt the journey map internally. A mix of organizations, functional areas, and seniority (with a large dose of “excited to be there” and “passionate about the customer”) always helps.

Q: How can you effectively conduct a CJM session with all participants being remote?

Jennifer: I’ve run CJM sessions both remotely and in person since before the pandemic, and I’ve found that a combination of factors works well:

- Brainstorming should happen both before and during the session, which suits in-person and remote journey mapping sessions alike.

- Ideally there’s a good screenshare and/or whiteboard collaboration tool so everyone can see what’s happening at the same time (Miro is one example, or Teams could work well).

- It’s a small thing, but get people to turn on their cameras! There tends to be more active participation when people feel accountable (aka people can see you!). And don’t be afraid to ask people to respond: remote workshops can be intimidating to jump into, especially with different communication styles in the room, and we want everyone to participate and have a voice!

Q: Does the peak-end rule apply to every session or to the overall customer journey?

Jennifer: Peak-end tends to refer to a single session, for example a visit to a restaurant or one session of using a digital product. But it can also describe an overall experience if you’re using multiple touchpoints across a broader journey. For example, if I visit a Nike store and they don’t have my shoe size, but the sales associate uses their tablet to order the shoes online and has them sent to my house within an hour, that would be one total experience.

A good rule of thumb is to know where your peaks (or troughs!) are and where your “ends” usually are for customers. Depending on your product, they might not be where you assume. Asking customers is a good way to get a steer on that as well.

Q: How do you find the peaks? Would you let customers rate different moments, or straight up ask for their peaks and ends?

Jennifer: Autonomic data (such as heart rate or sweating) is probably the way to go, combined with qualitative research and a little common sense. Customers are actually much more likely to know clearly where the worst point, or trough, of a bad experience is than where their peak was. But the idea behind peak-end is that their memories are based on two points, not on the total experience. As with most things, it’s a little bit of art and a little bit of science.

Q: You talked about “moments of truth” in CX That Sings. Why are they important, and what should we do with them?

Jennifer: Moments of truth tend to be where opinions are formed and decisions are made – if we think back to Peak-end, these are most likely to be the source of that peak (or trough). MoT are also helpful when it comes to prioritizing CX work – we know where to concentrate our efforts. In other words, not all touchpoints and moments in the journey are created equal – which I think is a key concept to embrace in terms of prioritization, funding, effort and resourcing.

Q: We know within our audience there are different people with different needs, how can we make sure we create an experience that is great for a variety of people?

Jennifer: This is a common issue for companies putting together journey maps. In the past I’ve worked with brands that either create totally separate maps or separate out different audiences on the same map (for ASDA or Walmart, for example, you could have Families and Single Shoppers on the same map).

When audiences are separated out on the same map, it usually gets confusing. I’m a proponent of “one customer, one map” because it makes communication easier (which is the whole point of a customer journey map!).

I think in this case it also depends on the product. With a digital product, you could separate out landing pages and user paths so that it’s almost two separate experiences. If that’s not possible, make sure you know your one main audience (usually determined from a margins and cost-of-acquisition perspective), which is the audience that “wins” if there’s a moment in the journey where you can’t find a good middle ground.

Q: How often should you conduct CJM in your company?

Jennifer: It depends. A fast-moving, fast-evolving product and/or customer means more frequent journey mapping. A bigger, slower, more traditional company might be able to get away with once every 2 to 3 years (which I wouldn’t recommend). I believe gut-checking the map once a year is a good rule of thumb, but again, YMMV.

Q: I assume that a good CJM needs both qualitative and quantitative research and data. What is your take on the quantitative side, and how should we integrate it?

Jennifer: I’m a big believer in quantitative analysis; I think you have to look at what people do, because, as the famous saying goes, “What people say, what people do, and what they say they do are entirely different things.” BUT you can’t always tell a complete story with quant. If you’ve got behavioral data from a website, for instance, it can tell you a lot about what people love on your site, what they’re interacting with, and for how long, but any hypothesis about WHY will be speculation until it’s tested. Even autonomic data can tell you that people’s hearts are beating faster and they’re sweating, but it can’t tell you whether that’s good or bad!

There are other ways to use quant as well, for instance knowing how many people are calling support and why. So again, it depends on what you’re trying to get at, but quant will tell you a lot about how people are using individual touchpoints and what their potential issues and pain points might be.

Q: Can you tell us a bit more about the Think-Do-Stop model?

Jennifer: I created this model to unify the “middle” section of the Customer Journey Map. This section can often become a laundry list of moments, so I wanted to get people thinking about it as a 360 view of the same moments in the journey: what are people trying to do, what’s stopping them, and what do they think about that? I’ve found in the past that people weren’t putting enough thought into customer pain points and what customers were trying to accomplish, and were instead concentrating on what customers were saying and/or thinking.

Q: What do you think about chatbots, where in the customer journey should they sit?

Jennifer: Chatbots can be a blessing and a curse. As someone who works a lot with AI, machine learning and so on, I often use the rule of thumb that “people and machines are better together.” I think many executives have used chatbots as a catch-all cost-saving measure without considering the customer experience of being frustrated and unable to get answers. In those cases, I do think chatbots can work much like IVR (interactive voice response) systems in call centers: answering common questions, but quickly routing to a human if and when that’s needed.

Transparency is key here – there was a great tweet I saw the other day from someone saying that the fake thinking/typing bubbles on chatbots drove them crazy because it was clearly a bot – the person felt the company was treating them like they were too stupid to figure out this was a bot!

So to answer your question, we need to consider the balance between cost savings/automation and the customer experience, along with the fact that for many customers, talking to a person is the only interaction they’d like to have. As we test and develop chatbot functionality, I think customers will become more comfortable with chatbots, chatbot quality will increase, and chatbots will therefore become more useful and universally applicable over time.

Join the Feedback Tribe for more close up sessions with Customer Experience experts!

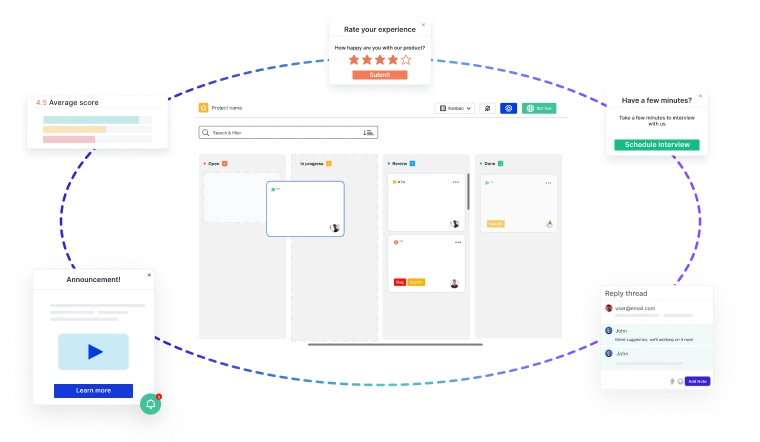

Close the Feedback Loop with Actionable Insights

Building great products starts with customer feedback at every stage of your

Product Development Lifecycle (PDLC)

- 🚀 Capture insights effortlessly—from feature discovery to post-launch improvements.

- 📊 Turn feedback into decisions—prioritize requests, track issues, and refine the user experience.

- 🔄 Iterate faster—validate ideas, reduce friction, and keep customers engaged.

Usersnap helps you collect, manage, and act on feedback—seamlessly.

Sign up today or

book a demo with our feedback specialists.