Resilience testing is a form of “non-functional testing” that examines how an application behaves under stress. With consumer expectations rising, resilience testing is more important than ever.

That’s why companies like Cisco are taking resilience testing very seriously, with 75% of all of Cisco’s applications tested for resilience as of mid-2016.

What is software resilience testing?

Software testing, in general, involves many different techniques and methodologies to test every aspect of the software regarding functionality, performance, and bugs.

Resilience testing, in particular, is a crucial step in ensuring applications perform well in real-life conditions. It belongs to the non-functional side of software testing, which also includes compliance testing, endurance testing, load testing, recovery testing and others.

As the term indicates, resilience in software describes its ability to withstand stress and other challenging factors to continue performing its core functions and avoid loss of data.

Or as defined by IBM: “Software solution resiliency refers to the ability of a solution to absorb the impact of a problem in one or more parts of a system, while continuing to provide an acceptable service level to the business.”

Since no software can be guaranteed never to fail, you should build in ways to recover from disruptions. With fail-safe mechanisms in place, it is possible to largely avoid data loss when a crash occurs and to restore the application to its last working state with minimal impact on the user.
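One common way to restore an application to its last working state is to checkpoint that state to disk. The following is a minimal Python sketch, not a method described by any of the companies mentioned here; the function names `save_checkpoint` and `load_checkpoint` and the JSON file format are assumptions chosen for illustration.

```python
import json
import os
import tempfile


def save_checkpoint(path: str, state: dict) -> None:
    """Write state atomically: write a temp file, then rename it over the target."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    try:
        with os.fdopen(fd, "w") as f:
            json.dump(state, f)
            f.flush()
            os.fsync(f.fileno())  # make sure the bytes reach the disk
        # os.replace swaps the file in one step, so a crash mid-write
        # leaves the previous checkpoint untouched.
        os.replace(tmp_path, path)
    except BaseException:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise


def load_checkpoint(path: str, default: dict) -> dict:
    """Return the last saved state, or a copy of default if none exists."""
    try:
        with open(path) as f:
            return json.load(f)
    except FileNotFoundError:
        return dict(default)
```

On startup, the application would call `load_checkpoint` and resume from whatever state it returns. Writing to a temporary file first means a crash during a save cannot corrupt the checkpoint that is already on disk.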

One way to improve the resilience of software is to host it on cloud servers, which replaces in-house infrastructure with a typically more resilient cloud architecture and so reduces the chance of internal system failures. While disruptions do occur at the cloud level as well, cloud operators usually have sophisticated resilience and recovery systems in place.

Examples of how software resilience testing is done

Resilience testing at Netflix

A great example of successful resilience testing at the cloud level is Netflix and its so-called Simian Army. Even though all Netflix services are hosted on Amazon Web Services’ state-of-the-art cloud servers with cutting-edge hardware, the company realized that the sheer scale of its operations makes failures unavoidable.

To prepare for these failures, Netflix developed its own tool that creates random disruptions in the system in order to test its resilience. The tool was designed to simulate “unleashing a wild monkey with a weapon in your data center (or cloud region) to randomly shoot down instances and chew through cables” and was aptly named Chaos Monkey.

By identifying weaknesses in their systems, Netflix can then build automated recovery mechanisms to deal with them should they occur again in the future.

The tool is run while Netflix continues to operate its services, although in a controlled environment and in ideal time frames. By only running Chaos Monkey during US business hours on weekdays, the company ensures that their engineers will have the maximum capacity for dealing with the disruptions and that server loads are minimal compared to peak consumer usage times.
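The scheduling idea can be illustrated with a small Python sketch. This is not Netflix's Chaos Monkey code; the function names, the 9:00 to 17:00 window and the instance identifiers are assumptions made for the example. It only shows the two ingredients described above: a random choice of victim, and a guard that restricts the disruption to weekday business hours.

```python
import random
from datetime import datetime
from typing import Optional, Sequence


def in_test_window(now: datetime, start_hour: int = 9, end_hour: int = 17) -> bool:
    """True on weekdays (Mon-Fri) from start_hour up to, not including, end_hour."""
    return now.weekday() < 5 and start_hour <= now.hour < end_hour


def pick_victim(
    instances: Sequence[str], now: datetime, rng: random.Random
) -> Optional[str]:
    """Pick one instance to terminate, or None outside the test window."""
    if not instances or not in_test_window(now):
        return None
    return rng.choice(instances)


# Hypothetical instance IDs; a real tool would query the cloud provider.
victim = pick_victim(["i-001", "i-002", "i-003"], datetime.now(), random.Random())
if victim is not None:
    print(f"would terminate {victim}")
```

Passing the clock and the random generator in as arguments keeps the selection logic deterministic under test, which matters for a tool whose whole purpose is to behave unpredictably in production.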

After early successes, Netflix quickly developed additional tools to test other kinds of failures and conditions. Among these tools were Latency Monkey, Conformity Monkey, Doctor Monkey and others, collectively known as the Netflix Simian Army.

Resilience testing with the Simian Army has since become a popular approach for many companies, and in 2016 Netflix released Chaos Monkey 2.0 with improved UX and integration for Spinnaker.

Resilience testing at IBM

To get an idea of how companies react to different kinds of failures, we can look at how resilience testing is done at IBM. The team at IBM has identified two significant components of resiliency: the problem impact and the service level that is considered acceptable once the problem occurs.

Ideally, any failure would have no impact at all on the consumer. Since that is impossible to achieve, IBM focuses on minimizing that impact as much as possible. If a machine that is hosting the system or one of its components crashes, for instance, the requests on their way to that machine get redirected to another machine instantly and as transparently as possible to the users.
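Redirecting requests away from a crashed machine can be sketched in a few lines of Python. This is a simplified illustration rather than IBM's implementation: the backends are plain functions standing in for machines, and `send_with_failover`, `AllBackendsFailed`, `crashed_machine` and `healthy_machine` are hypothetical names.

```python
from typing import Callable, Sequence


class AllBackendsFailed(Exception):
    """Raised when no backend could handle the request."""


def send_with_failover(
    request: str, backends: Sequence[Callable[[str], str]]
) -> str:
    """Try each backend in order and return the first successful response."""
    failures = 0
    for backend in backends:
        try:
            return backend(request)
        except ConnectionError:
            failures += 1  # this machine is down, move on to the next one
    raise AllBackendsFailed(f"{failures} backend(s) failed")


def crashed_machine(request: str) -> str:
    raise ConnectionError("machine is down")


def healthy_machine(request: str) -> str:
    return f"handled {request}"


# The caller gets a normal response even though the first machine crashed.
response = send_with_failover("GET /orders", [crashed_machine, healthy_machine])
```

From the caller's point of view the crash is invisible, which is the transparency described above. A resilience test would deliberately take a backend down and check that responses keep arriving.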

A more dramatic event would be the failure of an entire data center, in which case “all the work that was being processed by that data center is continued by another data center – again as transparently as possible to the users, although in the event of a catastrophic outage you should be prepared for a significant impact.”

The goal at IBM is to minimize the impact and duration of failures. For a machine failure, this duration is usually measured in minutes, while a failure in a data center could cause disruptions of several hours.

To come up with meaningful resiliency test cases, IBM uses the solution operational model, which identifies all the components of the solution as well as their interactions. The team then looks at the solution’s non-functional requirements to list what the solution must deliver, such as response time, throughput and availability.
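Requirements such as response time, throughput and availability can be turned into automated checks. The Python sketch below shows one possible shape for such a check; it is not taken from IBM's material, and the `Requirements` class, the `violations` function and all threshold values are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Requirements:
    max_response_ms: float     # slowest acceptable response time
    min_throughput_rps: float  # fewest acceptable requests per second
    min_availability: float    # fraction of time the service must be up


def availability(total_seconds: float, downtime_seconds: float) -> float:
    """Fraction of the observed period during which the service was up."""
    return (total_seconds - downtime_seconds) / total_seconds


def violations(
    req: Requirements, response_ms: float, throughput_rps: float, avail: float
) -> List[str]:
    """Return the names of all requirements the measurements fail to meet."""
    failed = []
    if response_ms > req.max_response_ms:
        failed.append("response time")
    if throughput_rps < req.min_throughput_rps:
        failed.append("throughput")
    if avail < req.min_availability:
        failed.append("availability")
    return failed


# Hypothetical targets and measurements taken during a failure test.
req = Requirements(max_response_ms=500, min_throughput_rps=100, min_availability=0.999)
measured = availability(total_seconds=3600, downtime_seconds=36)  # 36 s down in 1 h
print(violations(req, response_ms=420, throughput_rps=150, avail=measured))
```

In this example, 36 seconds of downtime in one hour gives an availability of 0.99, which falls short of the assumed 0.999 target even though response time and throughput are within limits.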

Wrapping it up

With consumer expectations increasing, it is vital to ensure minimal disruptions to any service or software that enters the market these days. While cloud hosting can go a long way in minimizing failures, resilience testing should still make up a significant part of overall software testing.

There are many different approaches for resilience testing. Using chaos engineering and the Netflix Simian Army can help discover unusual problem sources and potential weaknesses in the system’s architecture. It requires capacities for controlled testing though, and for many companies, a more structured and theoretical approach like the one used by IBM makes sense.

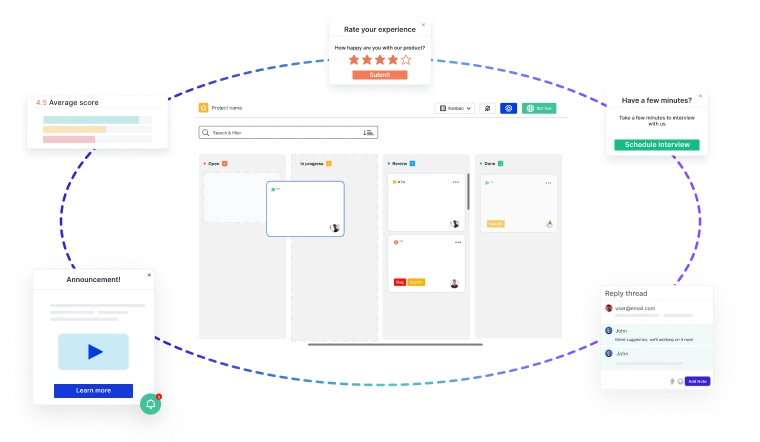

Close the Feedback Loop with Actionable Insights

Building great products starts with customer feedback at every stage of your

Product Development Lifecycle (PDLC)

- 🚀 Capture insights effortlessly—from feature discovery to post-launch improvements.

- 📊 Turn feedback into decisions—prioritize requests, track issues, and refine the user experience.

- 🔄 Iterate faster—validate ideas, reduce friction, and keep customers engaged.

Usersnap helps you collect, manage, and act on feedback—seamlessly.

Sign up today or

book a demo with our feedback specialists.